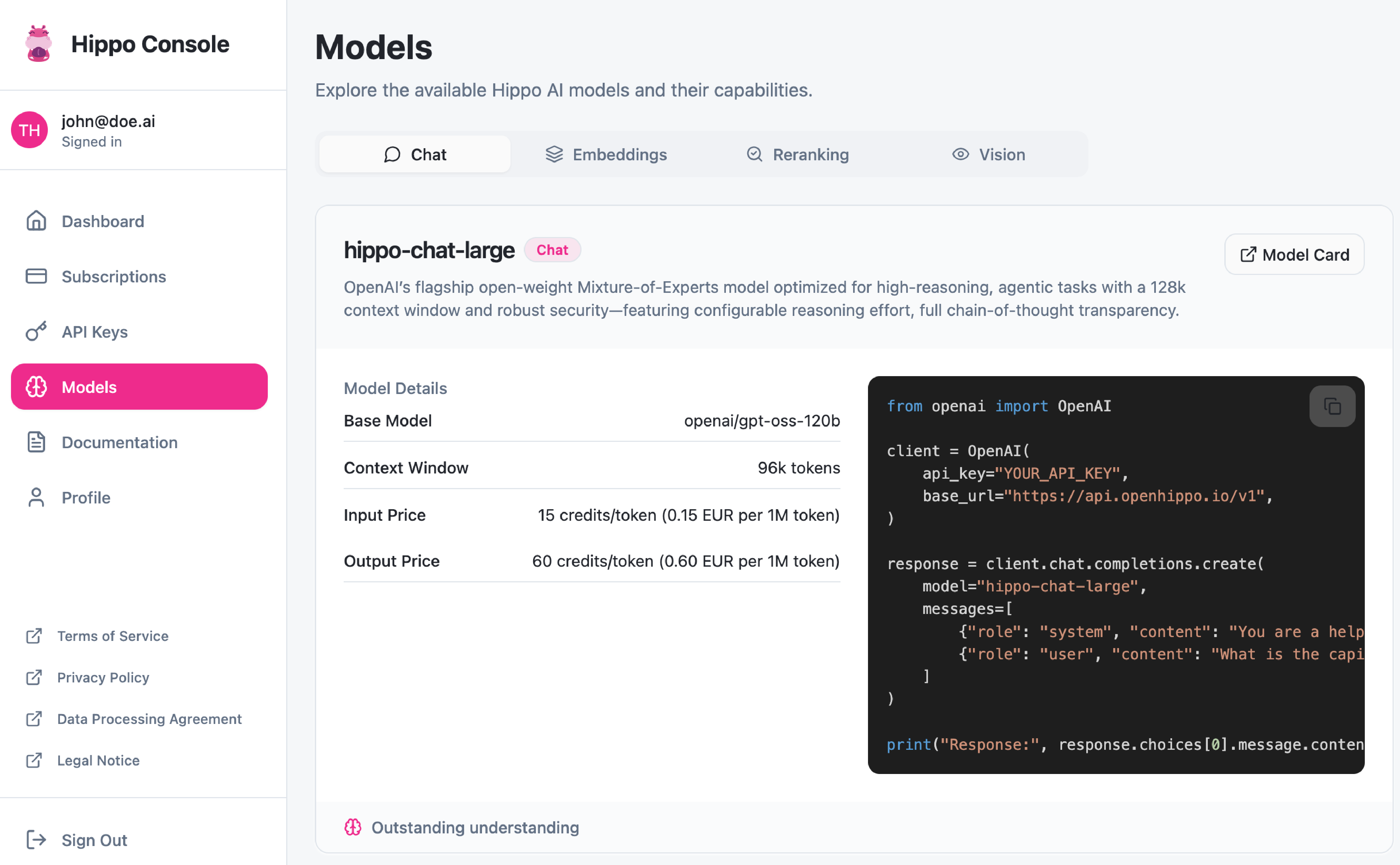

Sovereign AI Infrastructure

Today your AI runs on vendor infrastructure — metered, proprietary, hard to exit. Open-source technology changes that. We standardise your AI on open interfaces that run anywhere: on any cloud, on our managed service, or on your own hardware. You start where you are and go as far as you want — step by step, always in control.

What's the problem?

Locked in. Billed by the token.

Every request to OpenAI, Anthropic, or Azure AI costs money you can't forecast. As usage grows, so does the bill — and the vendor sets the price. Switching sounds easy until you see the migration cost.

The real lock-in isn't the contract — it's the API. Your applications are built around proprietary interfaces that only work with one provider. Moving means rewriting. Most organisations stay because leaving is too expensive — exactly as designed.

Open-source AI breaks this model. The same models and the same interfaces run on any infrastructure. Once your applications speak open standards, switching providers becomes a configuration change rather than a rewrite, and you can move on-premises the moment fixed costs make more sense than variable billing.

How does the journey look?

From lock-in to full control.

Four stages, your pace. You decide how far you go — we support every step.

Assess your current lock-in

We map your AI usage, costs, and how deeply your applications depend on proprietary APIs. You get a clear picture of what it would take to move — and what you stand to save.
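To give a feel for what a first pass over a codebase can look like, here is a minimal sketch in Python. It only counts imports of vendor SDK packages, and the watch list is a hypothetical example, not our audit tooling. An import count is a rough first signal: a real assessment also looks at which vendor-specific features the code relies on.

```python
import ast
from collections import Counter

# Hypothetical watch list of vendor SDK packages; adjust it to your own stack.
VENDOR_PACKAGES = {"openai", "anthropic"}


def count_vendor_imports(source: str) -> Counter:
    """Count absolute imports whose top-level package is on the watch list."""
    counts = Counter()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            modules = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            modules = [node.module]
        else:
            continue
        for module in modules:
            top_level = module.split(".")[0]
            if top_level in VENDOR_PACKAGES:
                counts[top_level] += 1
    return counts


# The sample is only parsed, never executed, so the packages need not be installed.
SAMPLE_SOURCE = """
import json
import openai
from anthropic import Anthropic
"""

print(count_vendor_imports(SAMPLE_SOURCE))
```

Run over every file in a repository, the totals show where vendor SDKs are concentrated and which services to look at first.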

Standardise on open interfaces

We migrate your applications to an open-source-compatible API layer. Everything keeps working exactly as before — but now you're free to run on any provider, our managed service, or your own hardware.

Reduce costs — switch providers or go on-premises

With open interfaces, you can move to the most cost-effective provider at any time — or go fully on-premises with fixed monthly costs. We support both paths and everything in between.

Stay in control — as long as you need us

We stay on for model updates, scaling, and new use cases. When you're ready to run independently, we hand over fully documented infrastructure your team can operate on their own.

What do you get?

Measurable results, not promises.

Concrete outcomes your organisation can track from week one.

Does it work in practice?

"We cut the AI bill by 60% — and the system was live in four weeks."

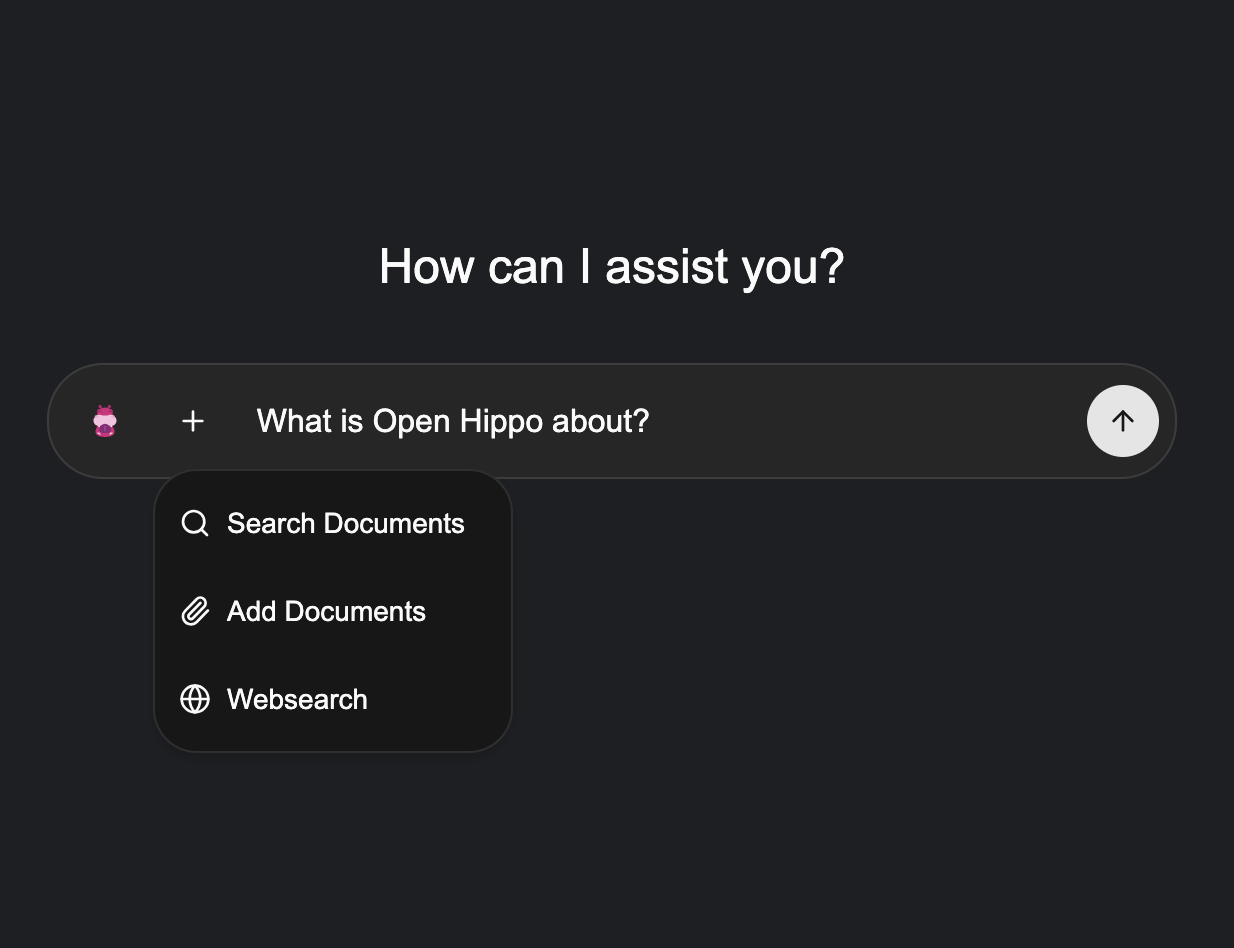

What's in the stack?

Open-source. Yours to keep.

Every component runs on open standards — auditable, portable, and independent of any single vendor.

vLLM

The inference engine behind the stack. vLLM is an open-source serving engine for large language models that is widely used in production, and the same engine powers our managed service and your on-premises deployment.

Docling

An open-source document conversion toolkit originally developed at IBM Research. It extracts structured content from PDFs, tables, and scanned documents so your AI can reason over real business content.

Hugging Face

The world's largest open-source model repository — thousands of production-ready models, one trusted source. No proprietary model dependency, ever.

LiteLLM

The portability layer that makes switching possible — compatible with every major AI provider, so your applications are never tied to one.

Got questions?

Common questions about sovereign AI.

Straight answers — no jargon, no pitch.

Do we have to commit to on-premises right away?

No. We start with open-source standardisation in the cloud — that alone reduces costs and removes lock-in. On-premises is an option, not a requirement. You decide how far you go and when. Some clients stop at cloud portability; others eventually move fully on-premises. Both are valid outcomes.

What does the open-source stack mean for us practically?

Your applications get migrated to open, standard interfaces. You're no longer tied to one vendor's proprietary API. The same code runs on our managed service, any cloud provider, or your own hardware — with no rewrites. The open-source stack is what makes all future flexibility possible.
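A minimal sketch of what "the same code" can mean in practice, assuming an OpenAI-style chat completions endpoint, which both vLLM and LiteLLM can expose. The backend names, URLs, keys, and model id below are placeholders, not real endpoints. The request is only built, never sent.

```python
import json
import urllib.request
from dataclasses import dataclass


@dataclass(frozen=True)
class Backend:
    """Deployment target. Only these values change between environments."""
    base_url: str
    api_key: str
    model: str


# Hypothetical targets for illustration; replace with your own configuration.
BACKENDS = {
    "cloud": Backend("https://llm.cloud.example/v1", "CLOUD_KEY", "example-model"),
    "managed": Backend("https://llm.managed.example/v1", "MANAGED_KEY", "example-model"),
    "on_prem": Backend("http://localhost:8000/v1", "unused", "example-model"),
}


def build_chat_request(backend: Backend, prompt: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request for the given backend."""
    body = {
        "model": backend.model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{backend.base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {backend.api_key}",
        },
        method="POST",
    )


# The application code is identical for every target; only the config key differs.
for name in ("cloud", "managed", "on_prem"):
    request = build_chat_request(BACKENDS[name], "Summarise this contract.")
    print(name, request.full_url)
```

The request body is the same for all three targets. What differs is the URL and the credentials, which is why a provider switch can live in configuration instead of application code.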

How quickly can we reduce our AI costs?

Typically within the first month after standardisation. Once your applications run on open interfaces, you can switch to the most cost-effective provider immediately — no migration required. For clients who go on-premises, full payback usually occurs within six to twelve months.

Where does our data actually live?

Where you decide, and that depends on which stage you're at. In the cloud phase, your data stays within your existing cloud account on Azure, AWS, or GCP. We standardise your AI on open interfaces, but the infrastructure remains yours: your tenant, your region, your compliance controls. In the managed phase, it lives in our certified green data centre in Augsburg, in your own rack, connected via a dedicated 100 Gbit/s VPN and GDPR-compliant. On-premises, it sits on your own hardware, in your own building. At every stage, your data never crosses a boundary without your explicit decision.

How much does it cost — including on-premises hardware?

The journey starts with an audit from €4,000 — that alone identifies your lock-in and can reduce cloud costs immediately. Standardisation is billed at a project rate on top of that. On-premises hardware for SMEs starts at around €40,000 with a one-time setup fee of €10,000. We spec everything to your actual workload — no over-provisioning, no surprises.
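To see how these figures relate to payback, here is a rough break-even sketch. It assumes the setup fee comes on top of the starting hardware price, and the monthly cloud bill and on-premises running cost in the example are hypothetical numbers, not client data.

```python
import math

HARDWARE_EUR = 40_000   # starting hardware price quoted above
SETUP_FEE_EUR = 10_000  # one-time setup fee quoted above (assumed to be additional)


def payback_months(monthly_cloud_bill: float, monthly_on_prem_cost: float) -> float:
    """Months until the upfront spend is recovered by lower monthly costs."""
    upfront = HARDWARE_EUR + SETUP_FEE_EUR
    monthly_saving = monthly_cloud_bill - monthly_on_prem_cost
    if monthly_saving <= 0:
        return math.inf  # on-premises never pays back at these numbers
    return upfront / monthly_saving


# Hypothetical example: a 7,500 EUR monthly cloud bill versus 1,250 EUR per month
# for power, hosting, and support on your own hardware.
print(payback_months(7_500, 1_250))  # 8.0
```

At these starting prices, a payback of six to twelve months corresponds to net monthly savings of roughly €8,300 down to €4,200. Larger hardware configurations shift the numbers accordingly.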

What happens if an open-source project we use gets discontinued?

We only select projects backed by major organisations, including companies with multi-billion-dollar businesses running on them. vLLM is supported on the major cloud platforms. Kubernetes originated at Google and is now maintained under the CNCF by a broad community of contributors. These are not hobby projects. And because everything is open source, even in the unlikely case that a project sunsets, the code stays available under its licence and can be forked and maintained.

Let's map your path to independence.

30 minutes, no pitch deck. We'll show you exactly where your current lock-in is and what it would take to remove it — step by step.